
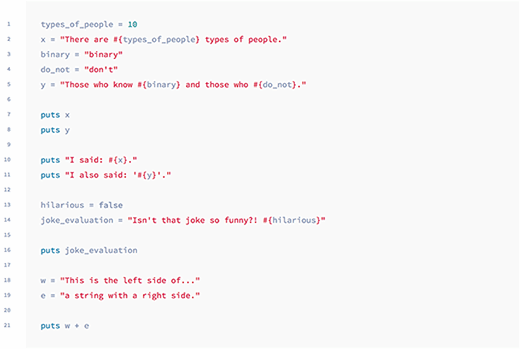

How long has Rishi Sunak's wife lived in the UK?

Though her permanent home is considered to be outside the UK, Rishi Sunak and Akshata Murty live in a mansion in Kensington, West London, with their two children. They married in Bengaluru, India, in 2009 and moved from the US to the UK four years later, suggesting Murty has lived in the UK for just under 10 years.

Non-domicile status is perfectly legal, with 238,000 non-doms living in the UK. Non-dom tax reliefs have been part of the country's history for centuries. The status, part of common law, dates back to 1799, when it was introduced by William Pitt the Younger, then prime minister, to help British colonialists based overseas protect their foreign earnings from sugar or tobacco plantations from British tax.

# Why do Ruby setters need “self.” qualification within the class?

Ruby setters, whether created by (c)attr_accessor or manually, seem to be the only methods that need self. qualification when accessed within the class itself. This seems to put Ruby alone in the world of languages:

- All methods need self/this (like Perl, and I think JavaScript).
- No methods require self/this (C#, Java).
- Only setters need self/this (Ruby?).

The best comparison is C# vs Ruby, because both languages support accessor methods which work syntactically just like class instance variables: foo.x = y, y = foo.x.

Here's a simple example in Ruby:

    class A
      # manual setter, but attr_accessor is same (the name @q is assumed; it was lost in extraction)
      def qwerty=(value); @q = value; end
      def asdf; self.qwerty = 4; end   # "self." is necessary in ruby?
      def xxx; asdf; end               # we can invoke nonsetters w/o "self."
    end

A bare "qwerty = 4" is ambiguous: is this a method invocation or a new local variable assignment?

Hi! I understand and appreciate the points you've made, and your example was great. Believe me when I say, if I had enough reputation, I'd vote up your response. Yet we still disagree.

First I claim, not without irony, we're having a semantic debate about the meaning of "ambiguity". When it comes to parsing and programming language semantics (the subject of this question), surely you would admit a broad spectrum of the notion:

- ambiguous: lexical ambiguity (lex must 'look ahead')
- Ambiguous: grammatical ambiguity (yacc must defer to parse-tree analysis)
- AMBIGUOUS: ambiguity knowing everything at the moment of execution

Each kind is resolved by gathering more contextual info, looking more and more globally. But by the same token, I'm saying "qwerty = 4" is un-Ambiguous in Ruby as it now exists, while it is Ambiguous in C#.

And we're not yet contradicting each other. Finally, here's where we really disagree: either Ruby could or could not be implemented, without any further language constructs, so that "qwerty = 4" invokes an existing setter. I say yes: another Ruby could exist which behaves exactly like the current one in every respect, except that "qwerty = 4" defines a new variable when no setter and no local exists, invokes the setter if one exists, and assigns to the local if one exists. In fact, a reason why I might be wrong would be interesting.

Imagine you are writing a new OO language with accessor methods looking like instance variables (as in Ruby and C#). You'd probably start with conceptual grammars something like:

    var = expr     // assignment
    method = expr  // setter method invocation

But the parser-compiler (not even the runtime) will puke, because even after all the input is grokked there's no way to know which grammar is pertinent. I can't be sure of the details, but basically Ruby does this:

    var = expr     // assignment (new or existing)
    // method = expr: disallow setter method invocation without self.

That is why it's un-Ambiguous, while C# does this:

    symbol = expr  // push 'symbol=' onto parse tree and decide later
                   // if local variable is def'd somewhere in scope: assignment
                   // else if a setter is def'd in scope: invocation

For C#, 'later' is still at compile time. I'm sure Ruby could do the same, but 'later' would have to be at runtime, because as ben points out you don't know until the statement is executed which case applies.

My question was never intended to mean "do I really need the 'self.'?" or "what potential ambiguity is being avoided?" Rather, I wanted to know why this particular choice was made. Maybe it's not performance. Maybe it just got the job done, or it was considered best to always allow a 1-liner local to override a method.

But I'm sort of suggesting that the most dynamical language might be the one which postpones this decision the longest, and chooses semantics based on the most contextual info: so if you have no local and you defined a setter, it would use the setter.
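The behavior under discussion can be checked directly. The following is a minimal sketch, not taken from the thread: the method names `assign_without_self` and `assign_with_self` and the class `Late` are hypothetical, added only for illustration. It shows that a bare `qwerty = 4` creates a local variable, that `self.qwerty = 4` calls the setter, and that adding a setter at runtime does not change how an already-parsed bare assignment behaves.

```ruby
# Hypothetical class for illustration; `qwerty` mirrors the name used in the question.
class A
  attr_accessor :qwerty

  # Bare assignment: Ruby treats `qwerty = 4` as a local variable assignment,
  # so the `qwerty=` setter is never called.
  def assign_without_self
    qwerty = 4
    qwerty # reads the local variable
  end

  # Explicit receiver: `self.qwerty = 4` is a call to the `qwerty=` setter.
  def assign_with_self
    self.qwerty = 4
  end
end

a = A.new
a.assign_without_self
p a.qwerty # => nil (the setter was not invoked)
a.assign_with_self
p a.qwerty # => 4

# Hypothetical class showing that a setter defined later, at runtime,
# does not turn an existing bare assignment into a setter call.
class Late
  def bump
    counter = 1 # a local variable, decided when the method body was parsed
    counter
  end
end

Late.class_eval { attr_accessor :counter } # setter added after `bump` was defined

l = Late.new
l.bump
p l.counter # => nil
```

Because methods can be added at any time, the alternative design proposed in the thread would have to look up a possible setter each time the assignment runs.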